Building My Linux Box…The Implementation

In an earlier post, I outlined my plan for building a Linux box. Here I describe how that plan was ultimately implemented. Life has taught me that all good planning is ultimately undone, and at some point, you must improvise. That has also proven true for this quest to upgrade my computing. Specifically:

- After ordering all the hardware, it came to me that it was dumb to attempt to reuse my old, semi-reliable, slow CD drive. So I purchased the HP 24X Multiformat DVD/CD Writer (dvd1260i) at Best Buy for $40.

- I discovered that my old PC had ATA hard drives (commonly called IDE drives) and my new Mobo only supports SATA (SATA 3Gb/s & 6Gb/s, and it includes an external eSATA port). This blows my plan to reuse my existing hard drives … stupid me, I should have checked. I did a quick scan for ATA controller cards and found a few (not many) for $15 to $30. I could buy the card and make this work, but it doesn’t seem like a good investment. The drives are 400GB drives and have a maximum data transfer rate of about 133 MB/s (i.e. ATA/133). The maximum data transfer rates of SATA II and SATA III are 300 MB/s and 600 MB/s, respectively. I can buy a Seagate Barracuda 500GB SATA II Internal Hard Drive for $70. Given my objective to increase the performance of my computing experience, buying a new SATA III hard drive should have been part of the original plan.

- After reading up on RAID and the Intel Rapid Storage Technology (RST), I concluded that it would be best to do a software RAID and not use RST.

- While I assumed in my original plan that I would dual boot the box with Linux and MS Windows, the comment from armahillo convinced me of what I suspected I should do; and that was to make wine, mono, and PlayOnLinux work for me. While I haven’t stressed them, so far so good. I have not installed VirtualBox and I suspect I will not … unless I get desperate.

- I was planning to reuse my old keyboard and mouse, but you know, I hated that keyboard and the mouse was already acting badly and about to die on me. So I ended up replacing them with something worthy of my new system.

Configuring Ubuntu

You’ll find many recommendations on how to jazz up a newly installed Ubuntu system. I found some of these suggestions useful:

Installing “dot” Files

Like many, over the years I have made a large time investment in tuning my .bashrc, .vimrc, and other such resource files. I installed my beloved “dot” files from my Git repository.

Installing Google Chrome’s Native PDF Reader in Chromium

Chromium is a fully open-source version of Google’s Chrome, and for licensing reasons, it doesn’t come packaged with the integrated Flash or a native PDF reader. Luckily, there is a workaround, documented here: Use Google Chrome’s Native PDF reader in Chromium.

Installing My Squeeze Box

I have a SqueezeBox device in my workshop for playing music. On my old PC, I had installed the SlimServer which would provide the music stream. I want to now reestablish that capability on the Linux box. The post How to Use Squeezebox With Ubuntu and the Logitech SqueezeBox Wiki gives you all the information you should need.

The Ubuntu (Debian) package for the Logitech Media Server (formerly known as SqueezeCenter or SlimServer) is maintained by Logitech rather than Ubuntu, and therefore apt-get cannot find it in the default repositories. To make it part of the package resource list (used to locate archives of the package distribution system in use on the system), you need to update the sources.list file. To do this, do the following:

sudo vim /etc/apt/sources.list

Scroll to the bottom of the file and enter the following information and then save:

## This software is not part of Ubuntu, but is offered by Logitech for the Logitech Media Server (formerly known as SqueezeCenter or SlimServer).

deb http://debian.slimdevices.com stable main
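If you would rather not edit the file by hand, the same change can be scripted. The following is only a sketch: add_apt_source is a name I made up for illustration, and it simply appends a line to a file when that exact line is not already there, so running it twice does not create duplicates.

```shell
# add_apt_source FILE LINE   (hypothetical helper, not part of apt)
# Append LINE to FILE only if FILE does not already contain exactly that line.
add_apt_source() {
    file=$1
    line=$2
    # -q: quiet, -x: match the whole line, -F: treat LINE as a fixed string
    if grep -qxF -- "$line" "$file" 2>/dev/null; then
        echo "already present: $line"
    else
        printf '%s\n' "$line" >> "$file"
        echo "added: $line"
    fi
}
```

Try it on a scratch copy of sources.list first. Writing to the real /etc/apt/sources.list requires a root shell, since the function does the append itself.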

Now do the following:

sudo apt-get remove --purge logitechmediaserver

sudo apt-get update

sudo apt-get install logitechmediaserver

Now open a browser and type “http://desktop:9000” as the URL, where “desktop” is the name of your Linux system. This brings you to a Squeezebox interface to configure the system.

If you want to start/stop Logitech Media Server manually you can run:

sudo /etc/init.d/logitechmediaserver stop

and

sudo /etc/init.d/logitechmediaserver start

Setting-Up Harmony Remote

I have the Logitech Harmony 650 Universal Remote Control for my home theater system. To program the device, it must be tethered to a web site via a Windows or Mac PC. The Harmony web site does reference some Linux support done by others. The postings “How to set up a Harmony remote using Linux” and “Logitech Harmony Universal Remote Linux Software Support” give you the basics of what you need to do. Within these sites you learn about the utilities concordance and congruity. The first utility provides most of the functionality of the Windows software provided by Logitech, and the second is a GUI application for programming Logitech Harmony remotes using concordance. To install these utilities, do the following:

sudo apt-get install concordance

sudo apt-get install congruity

You also have to configure the web browser to ask what to do with download links. It is best to use Firefox and you can configure downloads via: Edit->Preferences->General->Downloads->Always ask me where to save files.

Now plug the remote into the PC using the provided cable, and enter the command: sudo concordance -i -v. You should get a bunch of data and the word “Success”, verifying that you can talk to the device.

Now go through the following process:

- Plug in the remote via the provided USB cable.

- Visit the Harmony Remote’s member site URL. This appears to be a legacy support site; the Logitech site listed in the documentation that comes with the device will not work under Linux.

- Create an account or log in to your existing account.

- Skip/ignore the “you need to update your software” steps, and eventually a download prompt appears.

- Choosing ‘open’ rather than ‘save’ impressively results in the Congruity graphical setup launching.

- Step through the setup boxes as prompted.

Setting-Up Keyboard & Mouse

To improve my driving experience, I purchased the Logitech Wireless Illuminated Keyboard K800 and the Logitech M510 Wireless Mouse. These devices use the Logitech Unifying wireless technology, which allows a single wireless receiver to connect with multiple Unifying devices. I plugged in the mouse’s receiver and in short order the mouse was working. I was a bit concerned about the ability of the receiver to support multiple devices (i.e. the keyboard) simultaneously under Linux. Doing a quick search I found a post discussing how to do the device pairing under Linux. To install the ltunify pairing software, and do the pairing, do the following:

cd ~/src

git clone https://git.lekensteyn.nl/ltunify.git

cd ltunify

make install-home

To list the devices that are paired: sudo ltunify list

To pair a device: sudo ltunify pair, then turn your wireless device off and on to start pairing.

To unpair a device: sudo ltunify unpair mouse

To get help: sudo ltunify --help

Mouse Xbindkeys

The M510 mouse has extra buttons on its left side and the scroll wheel has a side-to-side click, but out of the box, they don’t do anything under Linux. It would be nice to make use of these extra buttons. To address this problem, I found pointers in these posts: How to get all those extra mouse buttons to work, How do I remap certain keys, Mouse shortcuts with xbindkeys, and Guide for setup Performance MX mouse on Linux (with KDE).

Basically, using xbindkeys, I want to map the mouse buttons to desired actions. I want to set up the M510 mouse’s extra buttons as follows:

- Left-side Button: up mapped to Page Up and down mapped to Page Down

- Scroll Wheel: move left mapped to Copy and move right mapped to Paste

- Scroll Wheel: press mapped to Paste

xev prints the contents of X events by creating a window and then asks the X Server to send it events whenever anything happens to the window. It’s sort of a keyboard and mouse events sniffer. If we know what event name the X Server gives to our buttons, then we can remap them. Using this program, we find out the following:

- Left-side Button: up is known by the X Server as button 9

- Left-side Button: down is known by the X Server as button 8

- Scroll Wheel: move left is known by the X Server as button 6

- Scroll Wheel: move right is known by the X Server as button 7

- Scroll Wheel: press is known by the X Server as button 2
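The xev output is noisy, so a small filter helps when hunting for button numbers. This is a sketch that assumes the usual xev layout, where each ButtonPress or ButtonRelease event carries a line containing “button N,”. The function name xev_buttons is my own.

```shell
# xev_buttons (hypothetical helper): read xev output on stdin and print
# each distinct mouse button number once, in numeric order.
xev_buttons() {
    # Keep only lines of the form "... button N, ..." and reduce them to N,
    # then sort numerically and drop duplicates (press and release both match).
    sed -n 's/.*button \([0-9][0-9]*\),.*/\1/p' | sort -un
}
```

Use it as xev | xev_buttons, click each button inside the xev window, and then close the window. The numbers are printed once xev exits.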

Now we need a mechanism to re-map mouse (or keyboard) button inputs. Xbindkeys is an X Window System program that enables us to bind commands to certain keys or key combinations on the keyboard, and it also works for the mouse. The file ~/.xbindkeysrc is what xbindkeys uses as a configuration file to link a command to a key/button on your keyboard/mouse. There is also xbindkeys_config, an easy-to-use GTK program for configuring xbindkeys. To install these tools, do the following:

sudo apt-get install xautomation xbindkeys xbindkeys-config

To create your initial xbindkeys configuration file, just run the following command:

xbindkeys --defaults > $HOME/.xbindkeysrc

The syntax of the contents of .xbindkeysrc is simple and is illustrated below:

# short comment

"command to start"

associated key

The "command to start" is simply a shell command (that you can run from a terminal), and "associated key" is the key or button.

Now, using an editor, update the .xbindkeysrc file to include the following:

# Do a Page Down when mouse left-side down button is pressed

"xte 'key Page_Down'"

b:8

# Do a Page Up when mouse left-side up button is pressed

"xte 'key Page_Up'"

b:9

# Move scroll wheel to the left to copy text

"xte 'keydown Control_L' 'keydown Shift_L' 'key c' 'keyup Control_L' 'keyup Shift_L'"

b:6

# Move scroll wheel to the right to paste text

"xte 'keydown Control_L' 'keydown Shift_L' 'key v' 'keyup Control_L' 'keyup Shift_L'"

b:7

# Press scroll wheel to paste text

"xte 'keydown Control_L' 'keydown Shift_L' 'key v' 'keyup Control_L' 'keyup Shift_L'"

b:2
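Since every entry follows the same three-line pattern (comment, quoted command, button), the entries can also be generated rather than typed. The helper below is a sketch of that idea. The name xbindkeys_stanza is hypothetical, and it only prints text, so nothing is changed until you redirect its output yourself.

```shell
# xbindkeys_stanza COMMENT COMMAND BUTTON   (hypothetical helper)
# Print one .xbindkeysrc entry: a comment line, the quoted command,
# and the mouse button line, followed by a blank separator line.
xbindkeys_stanza() {
    printf '# %s\n"%s"\nb:%s\n\n' "$1" "$2" "$3"
}
```

For example, xbindkeys_stanza "Page Down on side button" "xte 'key Page_Down'" 8 prints an entry equivalent to the first one above, and appending its output to ~/.xbindkeysrc adds the binding.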

To activate any modification of the .xbindkeysrc configuration file, you have to restart xbindkeys. This can be done via:

pkill xbindkeys

xbindkeys

Other useful resources are:

- xte is a program that generates fake input using the XTest extension.

- xvkbd is a virtual (graphical) keyboard program for the X Window System, which provides a facility to enter characters onto other X clients by clicking on a keyboard displayed on the screen.

- xbindkeys_show is a program to show the grabbing keys used in xbindkeys.

- xmodmap is a utility for modifying keymaps and pointer button mappings in Xorg.

Moving from SplashID to KeePass

I have been using the MS Windows based SplashID to store passwords, credit cards, account numbers, etc. securely on my PC and cell phone. I got it to work under Wine but I’m considering Linux alternatives. I’m growing tired of purchasing SplashID licenses and the user interface looks like it was designed in the 1970’s. I came across “Five Best Password Managers” which gave me the incentive to check out KeePass. KeePass is a cross platform, open source password manager. It is extendable via its plugin framework, where additional functionality can be added. It looks like I can use Dropbox and KeePassDroid to get the data on my cell phone. I found these sites useful to get the job done:

The first step was to get KeePass installed in Ubuntu. I found it on the Ubuntu Software Center or you can use:

sudo apt-get install keepass2 keepass2-doc

I then exported the contents of my SplashID database to a CSV file and imported it into keepass2. I set up the KeePass2 database within my Dropbox folder. This way, it can be synced with my cell phone. I then installed KeePassDroid on my cell phone, pointing it at the database within the cell phone’s Dropbox. KeePassDroid is a port of the KeePass password safe for the Android platform.

There is still some cleanup of the fields to do within the KeePass2 database, but the data is now accessible on both my PC and my cell phone.

Installing Wine

Wine allows you to run many Windows programs on Linux. Instead of simulating internal Windows logic like a virtual machine or emulator, Wine translates Windows API calls into Linux calls. I used the following to install Wine:

sudo add-apt-repository ppa:ubuntu-wine/ppa

sudo apt-get update

sudo apt-get install wine

Running Windows programs under Wine can be simple or complex; it all depends on the program. Ubuntu provides some guidance on how to use Wine; also check out Wine Documentation and Wine HowTo.

As of this writing, the only thing I have loaded via Wine is SplashID, which worked without any challenges.

Installing PlayOnLinux

PlayOnLinux is based on Wine, and so profits from all its features, yet it keeps the user from having to deal with all its complexity. I also installed this package, in part because it comes pre-configured to load some popular tools. I used it to install Internet Explorer (sometimes it’s the only browser you can get to work on a site) and Kindle.

Installing RAID

RAID is an acronym for Redundant Array of Independent Disks, and RAID can serve as the first tier in your data protection strategy. It uses multiple hard disks storing the same data to protect against some degree of physical disk failure. The amount of protection it affords depends upon the type of RAID used. I’m not going to go into all the types of RAID, nor their benefits, but you can find much of this information, and much more, in the following links:

In my case, I had an existing disk drive (non-RAID), loaded with data, and I wanted to add an additional drive to make it a RAID. This presents some challenges since you’re attempting to preserve the data. In this regard, I found the following links helpful:

I had already installed one SSD and one HDD in my system. I then installed a third drive that matched the HDD. My 128GB SSD has the device name /dev/sda1 and is mounted as /boot. The currently installed 1TB SATA HDD has the device name /dev/sdb1 and is mounted as /home. The newly installed 1TB SATA HDD has the device name /dev/sdc.

The procedure I used to create the RAID is as follows:

- Physically install the additional hard drive.

- Install mdadm, which is the Linux utility used to manage software RAID devices.

sudo apt-get install mdadm

- Partition the newly installed disk. Use the following inputs: n to create a new partition, p to make it a primary partition, 1 for the partition number, the same sectors as the currently installed drive, t to set the partition type, fd as the hex code for the type (Linux RAID autodetect), p to print what the partition table will look like, and w to write all of the changes to disk.

sudo fdisk /dev/sdc

- Create a single-disk RAID-1 array (aka degraded array) with the new hard drive. (Note the “missing” keyword is specified as one of the devices. We are going to fill this missing device later with the existing drive.)

sudo mdadm --create /dev/md0 --level=1 --raid-devices=2 missing /dev/sdc1

- At this point, if you do a sudo fdisk -l | grep '^Disk', you see a new disk, that being /dev/md0. This is the RAID, but not yet fully created.

- Make the file system (ext3 type like the currently installed hard drive) on the RAID device.

sudo mkfs -t ext3 -j -L RAID-ONE /dev/md0

- Make a mount point for the RAID and mount the device.

sudo mkdir /mnt/raid1

sudo mount /dev/md0 /mnt/raid1

- Copy over the files from the original hard drive to the new hard drive using rsync.

sudo rsync -avxHAXS --delete --progress /home/* /mnt/raid1

- Just in case of a disaster, copy the original hard drive to the SSD /dev/sda1 root file system as /home_backup.

sudo rsync -avxHAXS --delete --progress /home /home_backup

- After the copy, to see the status of the RAID, use the command sudo mdadm --detail /dev/md0. What you see is that the /dev/sdc1 drive is in the “active sync” state, but there is no reference to the other drive. When you do cat /proc/mdstat you see “md0 : active raid1 sdc1[1]”, but again no reference to the other drive.

- Edit your /etc/fstab file, change all references of /dev/sdb1 to /dev/md0, and reboot the system. With this, /dev/md0 will be used as /home on the next boot. This will free up the existing hard drive so it can be made ready for the RAID.

- With fdisk, re-partition /dev/sdb with a partition of type fd. Note that fdisk operates on the whole disk (/dev/sdb), not on the partition (/dev/sdb1). Use the following inputs: n to create a new partition, p to make it a primary partition, 1 for the partition number, the same sectors as the other drive, t to set the partition type, fd as the hex code for the type, p to print what the partition table will look like, and w to write all of the changes to disk.

sudo fdisk /dev/sdb

- Add /dev/sdb1 to your existing RAID array.

sudo mdadm /dev/md0 --add /dev/sdb1

- The RAID array will now start to rebuild so that the two drives have the same data. Use the following command to check the status of the rebuild.

sudo mdadm -D /dev/md0

- For Ubuntu, it seems it is also necessary to update the /etc/mdadm/mdadm.conf file. If this is not done, the RAID device will not be mounted when you reboot the system. The solution is to run the following commands on your system once the RAID drive has been configured. (The scan output is piped through sudo tee -a because a plain >> redirection would be performed by your own unprivileged shell and fail.)

sudo cp /etc/mdadm/mdadm.conf /etc/mdadm/mdadm.conf_backup

sudo mdadm --detail --scan | sudo tee -a /etc/mdadm/mdadm.conf
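While the array is running on one drive, or still rebuilding, /proc/mdstat shows the member status as something like [_U] instead of [UU]. Here is a small sketch that checks for that underscore. The function name md_degraded is my own, and the check is only a text match on the status brackets, not a substitute for mdadm --detail.

```shell
# md_degraded [FILE]   (hypothetical helper)
# Exit 0 if FILE (default /proc/mdstat) shows an array with a missing
# member, i.e. a status field such as [_U] or [U_]; exit 1 otherwise.
md_degraded() {
    # Match a bracketed run of U and _ characters with at least one "_".
    grep -q '\[[U_]*_[U_]*\]' "${1:-/proc/mdstat}"
}
```

A typical use would be: if md_degraded; then echo "RAID is degraded or rebuilding"; fi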

Linux Reboot … or … My System is Frozen and I Can’t Get Up!

While I was building the environment for my Linux box, I ran into some problems with Ubuntu. I found myself with frozen screens, or booted into blank screens, and other such things. It seems that Ubuntu 13.04 is currently a bit unstable or I just screwed things up badly … a little of both I suspect. I managed to get through these problems, but too often I got desperate and hit the on/off switch to get the box rebooted. Doing this can result in corrupted files and other such nasty things. So this motivated me to research the “correct” way to get out of these Linux near-death experiences.

The golden nugget here is actually at the end of this posting, that is the Magic SysRq Key. This gem can get you out of most any freeze but I provide more here since the alternatives might be less intrusive.

Stopping a Running Process

While this post’s focus is on how to reboot Linux, keep in mind that sometimes the problem that you’re attempting to solve may be handled via a simpler approach. Specifically, maybe you just need to kill a running process.

In the Bash shell, you can use job control:

- Ctrl+C – halts the current command

- Ctrl+Z – stops the current command, resume with fg in the foreground or bg in the background

- Ctrl+D – log out of current session, similar to exit

On a desktop version of Linux, there are normally six text console sessions available and one graphics session. You could leave a frozen GUI and go to a console screen to kill an offending process:

- You can access the consoles by pressing CTRL + ALT + Fx (where Fx is a function key on the keyboard from F1 to F6).

- Once you enter one of the consoles, you will be prompted for user name and password. Enter them and then you’ll reach a command prompt. Now you can kill the offending process using the kill command.

- To switch back to the graphic session, just press CTRL + ALT + F7.

The process “killing” could be done via the recommended kill -SIGTERM pid or the more forceful kill -SIGKILL pid. There are also versions of these tools, killall and pkill, that use the name of the process as an argument instead of the process ID.
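The “polite first, forceful second” approach can be wrapped up in a few lines. This is a sketch, not a standard tool: graceful_kill is a name I made up, and the default five-second grace period is an arbitrary assumption.

```shell
# graceful_kill PID [TIMEOUT_SECONDS]   (hypothetical helper)
# Send SIGTERM, give the process up to TIMEOUT seconds (default 5) to go
# away, and only then send SIGKILL.
graceful_kill() {
    pid=$1
    timeout=${2:-5}
    # Ask nicely first; fail if the PID does not exist or is not ours.
    kill -TERM "$pid" 2>/dev/null || return 1
    while [ "$timeout" -gt 0 ]; do
        # kill -0 sends no signal; it only tests whether the PID still exists.
        kill -0 "$pid" 2>/dev/null || return 0
        sleep 1
        timeout=$((timeout - 1))
    done
    # Still in the process list after the grace period: use the signal
    # that cannot be caught or ignored.
    kill -KILL "$pid" 2>/dev/null
    return 0
}
```

Keep in mind that a process that has exited but has not yet been reaped by its parent still shows up for kill -0, so in that case the function simply waits out the grace period.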

xkill is a utility for forcing the X server to close connections to clients. It is useful for closing an X application that has become unresponsive or is misbehaving in general. Execute xkill, and once it’s running, simply click on the window you wish to kill. The X server then drops that client’s connection, which in most cases makes the application exit, much like a kill -9 would.

When Kill -9 Does Not Work

You are supposed to be able to kill any process with kill -9 pid, but you may come across a process that just will not die. Usually this happens when you are trying to kill a zombie process or defunct process. These are processes that are dead and have exited, but they remain as zombies in the process list. The kernel keeps them in the process list until the parent process retrieves the exit status code by calling the wait() system call. This does not usually happen with daemon processes because they detach themselves from their parent process and are adopted by the init process (PID=1) which will automatically call wait() to clear them out of the process list.

You may sometimes see the daemon’s defunct PID in the process list for a brief moment before it gets cleaned up by the init process. You don’t have to worry about these. You can also end up with an unkillable process if a process is stuck waiting for the kernel to finish something. This usually happens when the kernel is waiting for I/O. Where you see this most often is with network filesystems such as NFS and Samba that have disconnected uncleanly. This also happens when a drive fails or if someone unplugs a cable to a mounted drive. If the device had a memory-mapped file or was used for swap then you may be really screwed. Any kernel calls that flush I/O may hang forever waiting for the device to respond.

Zombies can be identified in the output from the process status command ps by the presence of a “Z” in the “STAT” column. Zombies that exist for more than a short period of time typically indicate a bug in the parent program, or just an uncommon decision not to reap children. A zombie process is not the same as an orphan process. An orphan process is a process that is still executing, but whose parent has died. Orphans do not become zombie processes; instead, they are adopted by init (process ID 1), which waits on its children.
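To pick the zombies out of a long process list, you can filter on that STAT column. The sketch below assumes the four-column layout produced by ps -eo pid,ppid,stat,comm. The function name list_zombies is my own.

```shell
# list_zombies (hypothetical helper): read the output of
#   ps -eo pid,ppid,stat,comm
# on stdin and print "PID PPID COMMAND" for every zombie.
list_zombies() {
    # Skip the header line; column 3 is STAT, and a zombie's state starts with Z.
    awk 'NR > 1 && $3 ~ /^Z/ { print $1, $2, $4 }'
}
```

Run it as ps -eo pid,ppid,stat,comm | list_zombies. The PPID column is the interesting one, since it is the parent that has to call wait() (or be restarted) before the zombie disappears.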

Standard Reboot Commands

The vast majority of your system shutdowns or reboots will follow one of these two forms. The first will halt the system so you can power it off, and the second will reboot the system:

shutdown -h now

shutdown -r now

shutdown is the preferred and safest way to stop Linux. Nevertheless, in the tradition of Unix, Linux gives you more than one way to accomplish this task. reboot, halt, and poweroff are some additional commands that Linux provides to stop the system. These commands are similar to shutdown but can be more “forceful”.

The simplicity of these commands gives the illusion that Linux is either running or not running. It isn’t quite that simple. Linux can operate in several states called runlevels.

Linux Runlevels

A runlevel is a preset operating state for the Linux operating system. A system can be booted into any of several runlevels, each of which is represented by a single digit integer. Each runlevel designates a different system configuration and allows access to a different combination of processes and system resources.

Seven runlevels are supported by the traditional System V style init. They are:

- 0 – System Halted: all system activity is stopped, the system can be safely powered down

- 1 – Single User: a single superuser is the only active login and no daemons (services) are started. It is mainly used for maintenance.

- 2 – Multiple Users: multiple users are allowed but the network filesystem (NFS) is not.

- 3 – Multiple Users: multiple users are allowed with a command line (i.e., all-text mode) interface; the standard runlevel for most servers

- 4 – User-Definable

- 5 – Multiple Users: multiple users are allowed with a graphical user interface; the standard runlevel for most desktop systems

- 6 – Reboot: just like runlevel 0 except a reboot is issued; used when restarting the system

In the interests of completeness, there is also a runlevel ‘S’ that the system uses on its way to another runlevel. Read the man page for the init command for more information, but you can safely skip this for all practical purposes.

By default, many Linux distributions boot either to runlevel 3 or to runlevel 5. The former permits the system to run all services except for a GUI. The latter allows all services including a GUI. (Debian-based systems such as Ubuntu are an exception: they default to runlevel 2 and start the GUI there.)

Booting into a different runlevel can help solve certain problems. For example, if a change made in the X Window System configuration on a machine that has been set up to boot into a GUI has rendered the system unusable, it is possible to temporarily boot into a console (i.e., all-text mode) runlevel (i.e., runlevels 3 or 1) in order to repair the error and then reboot into the GUI. Likewise, if a machine will not boot due to a damaged configuration file, or will not allow logging in because of a corrupted /etc/passwd file or a forgotten password, the problem can be solved by first booting into single-user mode (i.e. runlevel 1).

There are two commands for directly reading or manipulating the Linux runlevels:

- runlevel – Use the runlevel command to tell you two things: the last runlevel and the current runlevel. If the first character is ‘N’, it stands for none, meaning there has been no runlevel change since powering up.

- telinit – The program responsible for altering the runlevel is init, and it can be called using the telinit command.
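The two-field output of runlevel (for example “N 2”) is easy to misread, so here is a sketch that spells it out. The function name describe_runlevel is my own invention. It only interprets the string it is given and does not change anything.

```shell
# describe_runlevel "PREV CUR"   (hypothetical helper)
# Turn the two-field output of the runlevel command into a sentence.
describe_runlevel() {
    prev=${1%% *}    # text before the first space
    cur=${1##* }     # text after the last space
    if [ "$prev" = "N" ]; then
        echo "no runlevel change since boot; current runlevel is $cur"
    else
        echo "switched from runlevel $prev to runlevel $cur"
    fi
}
```

On a live system you would call it as describe_runlevel "$(runlevel)".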

The topic of runlevels is actually much richer than what is illustrated here. It plays a key role in Linux background processes, called services or daemons. For more, check out Managing Services in Ubuntu, Part I: An Introduction to Runlevels and Part II.

Getting a Login From a Frozen GUI Screen

A not so common problem is when a frozen, full-screen X application takes control of your mouse and keyboard, and it seems that the only way to regain access to the system is to force a shutdown. The fact is, if you could get to some form of terminal session, you might be able to kill the offending process and get out of this frozen state. This is where accessing a console screen by pressing CTRL + ALT + Fx (where Fx is a function key on the keyboard from F1 to F6) can be very handy.

The X Server can often be the source of these frozen screen situations, so restarting the X Server may be the solution. This can be done via the key combination Ctrl+Alt+Backspace. Keep in mind that you will lose any unsaved data in applications. Also, this capability is turned off by default on many Linux systems (including Ubuntu). This was done to avoid confusion for people accustomed to MS Windows. An alternative key combination is as follows:

Press AltGr + SysRq + K instead (AltGr is the RIGHT ALT button and SysRq is labelled “Print Screen” most of the time; remember to press and hold the keys in the right sequence, i.e. starting with AltGr and ending with the K(ill) key).

You can turn back on the Ctrl+Alt+Backspace by following the instructions here.

Magic SysRq Key

If the system completely locks up, or your filesystem fails, there are still alternatives. The “magic SysRq key” provides a way to send commands directly to the kernel, either from the keyboard or through the /proc filesystem. It is enabled via a kernel compile-time option, CONFIG_MAGIC_SYSRQ, which seems to be standard on most distributions. The magic SysRq key (labelled PrintScrn or Print Screen on some keyboards) is a key combination understood by the Linux kernel, which allows the user to perform various low-level commands regardless of the system’s state. This is a surprising feature of the kernel but is commonly used to perform a safe reboot of a locked-up Linux computer. See this post for some historical perspective on the SysRq key.

To check if the CONFIG_MAGIC_SYSRQ option is enabled on your Linux kernel, check the configuration file that is installed in the /boot partition. Do this via:

cat /boot/config-$(uname -r) | grep CONFIG_MAGIC_SYSRQ

If you get “CONFIG_MAGIC_SYSRQ=y”, then it’s enabled on your kernel.

When running a kernel with SysRq compiled in, /proc/sys/kernel/sysrq controls the functions allowed to be invoked via the SysRq key. If the file contains “1“, that means that every possible SysRq request is allowed. See here for more on the /proc/sys/kernel/sysrq.
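Values other than 0 and 1 are a bitmask. Going by the kernel’s sysrq documentation, 16 allows sync, 32 allows remounting read-only, 64 allows signalling processes (the E and I steps), and 128 allows reboot/poweroff. The sketch below tests one bit. The function name sysrq_allows is my own, and you pass it the value yourself rather than have it read the file.

```shell
# sysrq_allows VALUE BIT   (hypothetical helper)
# Exit 0 if a /proc/sys/kernel/sysrq VALUE permits the function group BIT
# (e.g. 16 = sync, 32 = remount read-only, 64 = signalling, 128 = reboot).
sysrq_allows() {
    value=$1
    bit=$2
    # A value of 1 means every SysRq function is allowed.
    [ "$value" -eq 1 ] && return 0
    # Otherwise the value is a bitmask: test the requested bit.
    [ $(( value & bit )) -ne 0 ]
}
```

For example, sysrq_allows "$(cat /proc/sys/kernel/sysrq)" 128 tells you whether the final B (reboot) step is permitted. A value of 176 (128+32+16) would allow sync, remount, and reboot, but not the E and I signalling steps.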

To actually reboot the machine there is a well-known key sequence to follow: REISUB (or REISUO if you want to turn off the system instead of rebooting). Basically, press and hold ALT + SysRq, then press R, E, I, S, U, B in turn, with about 1 second between each letter (do not type it fast). Your system will reboot. This is a safer alternative to just cold rebooting the computer.

Mnemonic for REISUB is Reboot Even If System Utterly Broken, and the keys pressed do the following:

R – Switch to XLATE mode

E – Send Terminate signal to all processes except for init

I – Send Kill signal to all processes except for init

S – Sync all mounted filesystems

U – Remount filesystems as read-only

B – Reboot

The magic SysRq key supports more than just the REISUB keys. To see the larger range of things you can do via the magic SysRq key’s direct communication with the kernel, check out here.

Building My Linux Box…The Plan

I concluded it was time to retire my current PC (a Dell Dimension XPS 400, Intel Pentium D 820, 2.8GHz w/Dual Core Technology, 2G of memory, purchased in January 2006 for ~ $2,500) and replace it with something better, aka faster. It’s performing poorly, but most of all, I want to do some experimenting with iPython & matplotlib, as well as GNU Radio & digital signal processing. To do this justice, I need a faster box, ideally loaded with Linux and X Windows.

I also think it’s time for me to build my own box, instead of purchasing it already packaged and assembled. They say you get more for your dollar, or at least you can invest the same money into things that will make a better system (instead of some more crappy speakers, mice, keyboards, etc.). Given I’m building it, I want to pay special attention to getting the performance up. I don’t need the top of the line CPU (I don’t believe you get sufficient value for your money), but I would invest in improving the predominant enemy of computer performance, that is, I/O. Also, I want the graphics to be fast and smooth. The work on matplotlib and signal processing is likely to be graphically intensive. I’m not going to be playing games on the system, but I’m going to keep an eye on the gaming community’s hardware preferences. I’m willing to go with a good (but not super, and therefore expensive) graphics board.

A fancy sound system isn’t a priority for me. While my interest in GNU Radio & digital signal processing may have some use for a good sound system, I think I could live with the integrated sound system that will come on the motherboard. Like graphics, if it proves unacceptable, I’ll make the investment on another occasion.

Since I’m buying components and assembling it, I want to do some cherry picking. When a vendor sells you an assembled box, they are often using cheaper / less functional components to save cost. I’m picking an Intel Core i5 CPU (I believe it has the best value for me). Also, I’m going to get a -K Series Intel CPU and a motherboard designed for overclocking, which provide optimal performance for gamers and high-power users. While I’m not doing gaming, I like the option of tuning the hardware and I have found the -K Series to be only slightly more expensive. For a small amount of additional cash, I get some cool capabilities.

To get the better I/O that I want, I’m going to purchase one solid-state drive (SSD) from which I plan to boot Linux. SSDs are very much more expensive byte-for-byte when compared to a standard hard drive, but boy can they fly! A faster CPU and faster drives are my most valuable investments. With the financial investment in the SSD, I’m not going to purchase new hard drives but reuse the ones I have in my current PC (I’ll need them since the SSD I can budget for will not be large). They are newer than the original Dell box, and besides, the hard drive has over 6 years of stuff on it, including a MS Windows environment that I have grown dependent on. That brings me to the next point.

I want to dual boot the system with both MS Windows and Linux. Linux will be on the SSD, and it is here that I plan to spend much of my time. I want to pick a Linux distribution that is well supported, popular, and has a good graphical desktop environment. I grew up on the Linux command line (then it was Unix) and I feel at home there, but I would like to try out the X Windows desktop. If at all possible, I want MS Windows to boot from my current hard drive. I don’t want to have to reload software or copy an image … you never can get it to be the same again. If I must, I’ll make the MS Windows drive the default boot as it is now (and Microsoft seems to insist on being default). If I can do this, moving into my new PC will be painless.

With my objectives and priorities fully articulated, let’s explore what I intend to build.

Central Processing Unit (CPU)

For my CPU I have picked the Intel Core i5-3570K Ivy Bridge 3.4GHz (3.8GHz Turbo Boost and 6M Cache) LGA 1155 77W Quad-Core Desktop Processor, which includes Virtualization Technology (VT-x) and Intel HD Graphics 4000. What a mouthful …. here is what this all means:

- Intel Core – Intel Core (the brand that also covered the earlier Core 2 family) is a brand name that Intel uses for various mid-range to high-end consumer and business microprocessors. In general, processors sold as Core are more powerful variants of the same processors marketed as entry-level Celeron and Pentium. Similarly, identical or more capable versions of Core processors are also sold as Xeon processors for the server and workstation market.

- i5-3570K – The very first question after you conclude that you’re going with the Intel Core line is Core i3 vs. i5 vs. i7 – Which one is right for me? I’m driven to the i5 since the current Intel Core i5 models are generally considered the best price/performance choice for a gaming system, and the i7 costs more for features I’m unlikely to need. Why K versions, you ask? Well, the default Ivy Bridge processors are much harder to overclock, whereas the K series are unlocked and come with tools for overclocking. This processor has overclocked test results running at a stable 4.7GHz.

- Ivy Bridge – Ivy Bridge is the latest generation of processors within the Intel Tick-Tock Development Model. Intel introduced its Sandy Bridge desktop and laptop processors at the start of 2011 as their new micro-architecture … the tock. Intel introduced Ivy Bridge in April 2012 as the shrink of that design to a new 22 nm manufacturing process … the tick. There is a school of thought that says you shouldn’t buy the latest generation CPU because you can get more value (aka performance/dollar) from the previous generation. But the prices I have seen and the comparative reviews have given me the courage to go with the latest generation. Also check out this review with a description of Ivy Bridge.

- Turbo Boost – This feature increases performance of both multi-threaded and single-threaded workloads. Intel Turbo Boost Technology 2.0 allows the processor core to opportunistically and automatically run faster than its rated operating frequency/graphic render clock if it is operating below power, temperature, and current limits. It can boost the frequency up to 3.8GHz.

- 6M Cache – This refers to the cache used by the central processing unit of a computer to reduce the average time to access memory. The cache is a smaller, faster memory which stores copies of the data from the most frequently used main memory locations. It comes in three levels: L1, L2, and L3. L1 cache (sometimes called primary cache) is the fastest cache and usually sits within the processor core itself. L2 cache comes between L1 and RAM (processor-L1-L2-RAM) and is bigger than the primary cache. The L1 and L2 caches are per core, but the last-level cache (L3) is shared among all cores and is sometimes called Smart Cache, since cache can be dynamically assigned to a core as it needs it. The “6M” refers to the 6 MB of data that the L3 cache can hold.

- LGA 1155 – LGA 1155, also called Socket H2, is an Intel microprocessor compatible 1155 pin socket which supports Intel Sandy Bridge and Ivy Bridge microprocessors.

- 77W – This is the Thermal Design Power (TDP), the maximum power the CPU is expected to dissipate when running real applications at its default clocks. TDP is a manufacturer’s figure, and thanks to this information, CPU cooler manufacturers can size their coolers.

- Quad-Core Desktop Processor – This is a multi-core processor (in fact a quad or 4 core), a computing component with four independent central processing units (called “cores”). Intel manufactures the four cores onto a single integrated circuit die (known as a chip multiprocessor or CMP), or onto multiple dies in a single chip package. The proximity of multiple CPU cores on the same die allows them to communicate at a much higher clock rate than is possible if the signals have to travel off-chip.

- Virtualization Technology – VT-x (i.e. x86 virtualization or Vanderpool) is the facility that allows multiple operating systems to simultaneously share x86 processor resources in a safe and efficient manner, a facility generically known as hardware virtualization. With virtualization, you can have several operating systems running in parallel, each one with several programs running. Each operating system runs on a “virtual machine”, i.e. each operating system thinks it is running on a completely independent computer. Note that on the Intel Core technology, the virtual machines are not assigned specific cores; the cores are shared by all of them.

- Intel HD Graphics 4000 – Before the introduction of Intel HD Graphics, Intel integrated graphics were built into the motherboard’s northbridge. HD Graphics 4000 is Intel’s 3rd generation of this integrated GPU technology. The HD 4000 was completely redesigned and offers many improvements. The IPC (instructions per clock) is reported to be up to 2x as high, and overall up to 60% more performance should be possible.
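Once Linux is up and running, one way to confirm a feature such as VT-x is to look at the flags the kernel lists in /proc/cpuinfo. The sketch below assumes a Linux system on an x86 CPU; the helper name has_cpu_flag and its optional second argument are made up for this example so the check can also be pointed at a saved copy of the file.

```shell
# Report whether a CPU feature flag appears in /proc/cpuinfo.
# has_cpu_flag is a hypothetical helper name; the second argument
# (defaulting to /proc/cpuinfo) lets you test against a saved copy.
has_cpu_flag() {
    local flag=$1
    local cpuinfo=${2:-/proc/cpuinfo}
    # Keep only the "flags" lines, then look for the flag as a whole word
    if grep -E '^flags' "$cpuinfo" 2>/dev/null | grep -qw -- "$flag"; then
        echo "$flag: present"
    else
        echo "$flag: not reported"
    fi
}

# vmx is the flag name Linux uses for Intel VT-x
has_cpu_flag vmx
```

A "not reported" result does not always mean the CPU lacks the feature, since virtualization support can also be switched off in the firmware settings.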

One highly desirable feature missing from the Intel i5 line is Hyper-Threading Technology. Hyper-Threading (HT) is a means for improving processor performance by supporting the execution of multiple threads (two is the current limit) on the same processor at once: the threads share the various on-chip execution units. HT is available on the i7 line of processors but I just can’t justify the cost of this additional functionality.

Motherboard (Mobo)

I need to match the CPU to a -K Series motherboard and I picked the Intel Desktop Board DZ77GA-70K with Intel Z77 Express Chipset family, which includes Intel High Definition Audio. The Intel high-definition audio chip allows you to use your computer to send digital audio signals to speakers, headphones, telephones and other audio equipment. Early computer audio systems could only produce simple stereo sound reproduction. The Intel HD audio system supports surround sound up to Dolby 7.1. The board supports the usual 32GB of RAM, Smart Response Technology, Smart Connect Technology, and Intel Rapid Storage Technology (Intel RST) for RAID 0, 1, 5, and 10. The “GA” in the motherboard’s name means that it contains Bluetooth/WiFi and a front panel USB 3.0 module (“GAL” means it has no Bluetooth/WiFi). The board supports Intel’s Fast Boot Technology, which can dramatically reduce the time to boot the system, and it supports high end graphics boards. It has eight USB 3.0 ports (4 external/4 header), ten USB 2.0 ports (4 external (2 Hi-Current/Fast Charging) / 6 internal), four Serial ATA 6.0 Gb/s ports, one eSATA 6.0 Gb/s port, four Serial ATA 3.0 Gb/s ports and many other features.


The Mobo comes with the Intel Visual BIOS graphical interface and animated controls, which allow you to configure settings faster and take full advantage of your Intel -K processors. The Visual BIOS uses the Unified Extensible Firmware Interface (UEFI) which defines a software interface between an operating system and Mobo firmware. UEFI is meant to replace the Basic Input/Output System (BIOS) firmware interface, present in all IBM PC-compatible personal computers.

The form factor for this Mobo is ATX. ATX (Advanced Technology eXtended) is a motherboard form factor specification developed by Intel in 1995 to improve on previous de facto standards like the AT form factor. There are different motherboard form factors, including microATX, Standard ATX and XL-ATX. This is important to keep in mind when picking a case.

The selling feature for me was that it’s an Intel product (motherboards are new to me and I need the emotional support), it seems easy to set up, it has gotten reasonable reviews (and here is another), and it has a reasonable price. It isn’t the most feature-rich Mobo nor what a die-hard overclocker would buy, but it seems a solid, stable product that will not give me any troubles or support problems and will perform well.

Memory (RAM)

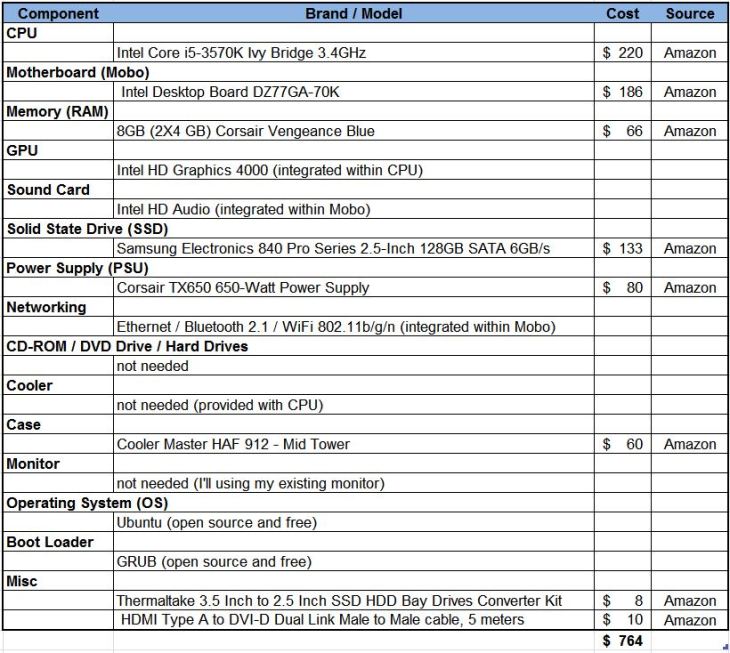

According to the September 2011 Steam hardware survey, 4GB of RAM is currently the most popular configuration among gaming PCs. This may be sufficient for a light home user; however, many power users and enthusiasts find 4GB to be insufficient. Many recommend at least 6GB for any enthusiast PC, especially in light of the relatively low cost of memory. I’m going with 8GB of RAM in an effort to assure good I/O, and 12GB or 16GB just seems like more than I will need. That amount of memory seems sufficient for your average gamer and should work for me. And the reviews I have seen also claim they have successfully overclocked this memory.


I also want to keep open the option to do some overclocking, so I need to consider memory based on Intel’s Extreme Memory Profile (XMP). I also want a memory provider with a solid reputation. The Intel Core i5-3570K processor supports DDR3-1333/1600 memory. With all this in mind, I chose the 8GB (2X4 GB) Corsair Vengeance Blue, 9-9-9-24, 1.5V PC3-12800 1600MHz DDR3 240-Pin SDRAM Dual Channel Memory. They are not top of the line memory but seem a good fit for my needs and have gotten solid reviews.

DDR3 or DDR3 SDRAM, an abbreviation for double data rate type three synchronous dynamic random access memory, is a modern kind of dynamic random access memory (DRAM) with a high bandwidth interface, and has been in use since 2007. The primary benefit of DDR3 over its immediate predecessor (i.e. DDR2) is its ability to transfer data at twice the rate (eight times the speed of its internal memory arrays), enabling higher bandwidth or peak data rates. The next generation, DDR4, is expected to be released to the market sometime in 2013. Its primary benefits compared to DDR3 are a higher range of clock frequencies and data transfer rates, along with a lower operating voltage.

DDR3 memory is classified according to the maximum speed at which it can work, as well as its timings. Timings are numbers such as 3-4-4-8, 5-5-5-15, 7-7-7-21, 9-9-9-24, where lower is better. Memory speed is specified via a number like this: DDR3-xxx / PC3-yyy or DDR3-xxx/yyy. The xxx number indicates the maximum data rate that the memory chip supports. Therefore, DDR3-1333 can work up to 1,333 million transfers per second, commonly quoted as 1,333MHz. Note this isn’t the real clock speed but twice that speed. So the real clock speed of DDR3-1333 is 666MHz. The yyy indicates the maximum transfer rate that the memory can reach. So memory labeled as DDR3-1333/10664 has a transfer rate of 10,664MB/s, or 21,328MB/s if it is running in dual channel mode. Most current boards are dual channel, while boards for Intel’s LGA 1366 socket are triple channel.
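The naming arithmetic above can be checked with a few lines of shell. This is only a sketch: it assumes the standard 64-bit (8-byte) wide memory channel, and the function name peak_mb_per_s is made up for illustration.

```shell
# Peak transfer rate in MB/s for a DDR3 rating.
# Assumes each memory channel is 64 bits (8 bytes) wide.
# peak_mb_per_s is an illustrative name, not a standard tool.
peak_mb_per_s() {
    local data_rate=$1        # the xxx in DDR3-xxx
    local channels=${2:-1}    # 1 = single channel, 2 = dual channel
    echo $(( data_rate * 8 * channels ))
}

peak_mb_per_s 1333      # single channel DDR3-1333
peak_mb_per_s 1333 2    # the same memory in dual channel mode
peak_mb_per_s 1600      # DDR3-1600, i.e. PC3-12800
```

The first two calls reproduce the 10,664 and 21,328 figures from the text, and the third shows where the 12800 in PC3-12800 comes from.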

The memory timings x-x-x-x indicate the number of clock cycles that it takes for the memory to perform a given operation. The smaller the number, the faster the memory. This set of four numerical parameters is called CL, tRCD, tRP, and tRAS. Sometimes there is a fifth value, which is the voltage. Check out Understanding RAM Timings for more information.
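Because timings are counted in clock cycles, the same number means a different real delay at different memory speeds. A rough sketch of the conversion to nanoseconds follows; it relies on the real clock being half the DDR3-xxx data rate, and cycles_to_ns is a name invented for this example.

```shell
# Convert a timing given in clock cycles to nanoseconds.
# The real clock is half the DDR3-xxx data rate, so one cycle
# lasts 2000 / data_rate nanoseconds.
# cycles_to_ns is an illustrative name.
cycles_to_ns() {
    local cycles=$1
    local data_rate=$2
    awk -v c="$cycles" -v r="$data_rate" \
        'BEGIN { printf "%.2f\n", c * 2000 / r }'
}

cycles_to_ns 9 1600    # CL 9 on DDR3-1600
cycles_to_ns 9 1333    # CL 9 on DDR3-1333
```

So a CL of 9 on DDR3-1600 is a shorter wait than a CL of 9 on DDR3-1333, which is why timings should be compared alongside the speed rating.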

Memory is sold in “kits” which are simply multiple single, similar (identical as possible) RAM modules packaged together. The intention is for them to be used in motherboards that have dual and triple (etc.) RAM channel capabilities.

Graphics Processing Unit (GPU)

I’m going to try and live with the on-board graphics processing unit (GPU) integrated with the CPU and invest that money elsewhere. The reviews of Intel’s newest integrated GPU that comes with the i5-3570K (HD Graphics 4000 or HD 4000) have been favorable (also see this). This is the third and latest generation of HD Graphics (now with 16 execution units) and appears to be a real contender to low end graphics cards. If it proves less than acceptable, I’ll invest in a graphics board another day.

Sound Card

Here again, I’m not buying a separate card but using the Intel High Definition Audio integrated into the motherboard. Frankly, I’m not sure if this will limit my GNU Radio & digital signal processing objectives but I’ll take the risk. If I’m unhappy for any reason, I’ll buy myself a sound board.

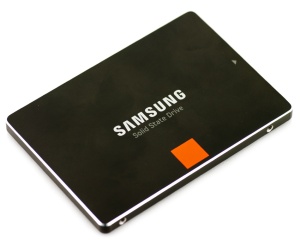

Solid State Drive (SSD)

I have chosen the Samsung Electronics 840 Pro Series 2.5-Inch 128GB SATA 6Gb/s for my Solid State Drive (SSD). Samsung has a great reputation in this space and it has gotten solid reviews.

What most people who use SSDs do (and what I plan to do) is to buy one large enough to hold the OS and applications, and also buy a hard drive to hold the rest of their documents, photos, videos, etc. That’s a good compromise which puts the most performance-critical files on the fastest drive and has the cheapest cost-per-byte for your voluminous data files, which typically have much lower performance requirements. But keep in mind that as soon as the amount of data written reaches the stated capacity of the device, the write bandwidth drops sharply. In fact, write bandwidth has been reported to fall by up to 70-80% once a drive is fully loaded with data and continues to operate under those conditions. Therefore, don’t fill the SSD.
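One simple way to keep an eye on this is a small check of how full the drive is. The sketch below is only an illustration: the 75% default limit is an arbitrary assumption rather than a vendor figure, and percent_used and check_fill are names made up for this example.

```shell
# Warn when a filesystem is fuller than a chosen limit.
# The 75% default is an arbitrary assumption, not a vendor figure.
percent_used() {
    # df -P prints one line per filesystem; column 5 is the capacity in percent
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

check_fill() {
    local used=$1
    local limit=${2:-75}
    if [ "$used" -ge "$limit" ]; then
        echo "warning: ${used}% used, limit is ${limit}%"
    else
        echo "ok: ${used}% used"
    fi
}

# Check the root filesystem, which is where the SSD would be mounted
check_fill "$(percent_used /)"
```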

Also, one of the most publicized downsides of SSDs is that they have a limited number of writes before they wear out—however, with most newer SSDs, this isn’t actually a problem. Most modern SSDs will become outdated before they die, and you’ll probably have upgraded by then, so there’s not really a huge need to worry about writing to the drive too many times.

Because of the high speed of the SSD, you’re going to want to use the 6Gb/s SATA ports on the motherboard. The standard 3Gb/s SATA ports don’t have the throughput; nevertheless, studies show the SSD still gives you benefits on them.

Power Supply Unit (PSU)

I chose the popular Corsair TX650 650-Watt Power Supply as my PSU. Most computers only consume around 150W, and even a high end computer might consume maybe 200W. That’s why most OEM computer manufacturers put small 250-350W PSUs in their systems. If you look at online reviews of highly overclocked systems with multiple video cards (SLI/Crossfire), they consume at most about 500-600W. I don’t believe I’ll ever approach these levels, so this PSU will give me plenty of headroom. The reviews I have read seem to claim that the best way to take advantage of the TX650’s quiet qualities is to ensure that the PSU intake air does not often exceed 30°C and that the load does not demand more than ~350W DC output. I believe my usage will fit in this sweet spot.

Networking

Networking capabilities are built into the motherboard. The Mobo comes with two Gigabit (10/100/1000 Mb/s) LAN subsystems using the Intel 82579V Gigabit Ethernet Controller. It also has a Bluetooth 2.1 & WiFi 802.11b/g/n module. There appears to have been some troubles with WiFi and Bluetooth module for DZ77GA-70K in 2012, but it has been reported to Intel and hopefully this has been worked out by now. I’ll have to make sure I update the firmware on the board when I get it.

CD-ROM / DVD Drive / Hard Drives

I’m not going to worry about this now. I anticipate loading all my software / data from the Web or transferring from my existing hard drives. Also, I’ll reuse my existing hard drives in this box.

Cooler

The Intel Core i5-3570K comes with a stock cooler. If I do overclock the CPU, I’m likely to need a better cooler, but this is fine for now.

Case

Picking a case has been the hardest decision for me. I guess this is because it’s not so much a technical decision as an aesthetic choice. I have narrowed my choice to the Cooler Master HAF 912 – Mid Tower Computer Case with High Airflow Design (19.5 x 9.1 x 18.9 inches ; 17.8 pounds). It has gotten good reviews, with the main complaint being that it needs more fans (there is plenty of room to install more, but only two are provided). The front panel comes with the older USB 2.0 ports, but the Mobo comes with a USB 3.0 panel that could be installed if desired. The case isn’t expensive but still has a sharp look and seems very versatile in its use and cooling.

Monitor

The Dell LCD monitor I presently have dates back to 2006 and isn’t equipped with HDMI, which is the only way to interface with the Mobo. I presently use my monitor via its Digital Visual Interface (DVI-D) input, but it also has Video Graphics Array (VGA) and Composite Video inputs. So if I wish to continue to use the monitor, I’ll need a converter of some type. I found that the DVI-D to HDMI conversion can be done via an inexpensive cable, so that is the way I’m going. I specifically need a HDMI Type A to DVI-D Dual Link Male to Male cable.

Operating System (OS)

I plan to install Ubuntu Linux on the SSD. Picking the Linux distribution was nearly as hard as picking the case. I chose Ubuntu because of its popularity and because I wanted to experience its desktop environment, GNOME, once again. I used GNOME many years ago when it was very young, I saw potential, and I would like to see how it has grown. I plan to spend the vast majority of my time within Xterm at the command prompt, but I also want to get familiar with Ubuntu/GNOME. I’ll also do most of my systems administration at the command prompt, but again, gaining familiarity with Ubuntu would be good.

How do I plan to install Ubuntu, given that I will not have an OS already installed and I will not have a CD-ROM/DVD? Ubuntu can be installed from a USB stick.

I’m not going to abandon MS Windows. I have many tools that I use in Windows and it’s not practical to just abandon them for something else, at least not right now. I would like to dual boot the system with Linux and MS Windows. Ideally, I’ll keep my old Windows image on my current PC’s hard drive, put that drive in my new system, and have the hard drive be my second OS on the bootloader’s chain of operating systems. I know this could be done if I chose to re-install MS Windows and all my applications, but I don’t know the challenges I’ll face given I’m using an established image …. it will be a learning opportunity!

If I’m forced to do a re-install of MS Windows, I might use Oracle VM VirtualBox, which is an x86 virtualization software package. VirtualBox can be installed on an existing host operating system as an application; this host application allows additional guest operating systems, each known as a Guest OS, to be loaded and run, each with its own virtual environment. The typical way of installing a guest operating system is to install it from the ground up. In general, you don’t see VirtualBox running a guest operating system from an existing drive or partition. Nevertheless, a search of the Web does show evidence that people have made it work this way (1, 2, 3, 4, 5, 6, 7).

The last, and least desirable, approach (for my needs) is to run Windows applications via Wine. Wine (originally an acronym for “Wine Is Not an Emulator”) is a compatibility layer capable of running Windows applications on Linux. Instead of simulating internal Windows logic like a virtual machine or emulator, Wine translates Windows API calls into Linux calls on-the-fly, eliminating the performance and memory penalties of other methods and allowing you to cleanly integrate Windows applications into your desktop. Since it doesn’t create a virtual machine, programs perform faster than in a VM. However, you’ll need to test it with your application since it doesn’t support all programs. Also, you’re not running MS Windows, just the MS Windows compatible applications. This is fine if your interest is in running Excel standalone, but you can’t perform anything that requires the MS operating system.

Boot Loader

A boot loader is the first software program that runs when a computer starts. It is responsible for loading and transferring control to the operating system kernel software. The kernel, in turn, initializes the rest of the operating system. GRUB (GRand Unified Bootloader) is a boot loader package developed to support multiple operating systems and allow the user to select among them during boot-up. GRUB is often the default boot loader for Linux and is preferred to the MS Windows boot loader since it makes up for numerous deficiencies while providing full-featured command line and graphical interfaces. GRUB is the default boot loader for Ubuntu, making it an easy choice. GRUB is powerful and complex, so check out How I configured grub as the default bootloader on a UEFI Boot system.

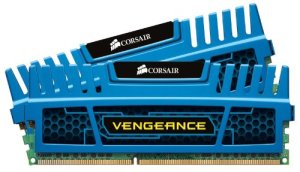

What does it Cost

Now that I have a plan, what will all this cost? I estimate it will be $765, less than one-third the cost of my present system. Granted, I’ll be reusing the monitor, keyboard, mouse, and some drives, but this is a substantial price difference for what will be a much more capable machine.

To see what I finally implemented, check out Building My Linux Box…The Implementation.

A Better Mobile Display for the Raspberry Pi

As I described in an earlier post, I run my Raspberry Pi (RPi) as a headless system, using Cygwin/X’s xterm for command line interaction with the RPi, with my PC being my X server to support any X Window applications. I can move files between the PC and the RPi via my pseudo-Dropbox. I really recommend this configuration and it’s working perfectly for me.

This configuration gives me a great deal of utility but no mobility …. I’m still tied to my desktop PC. Maybe I should consider replacing the desktop PC with a laptop but I don’t want to spend the money. I have seen some small, inexpensive keyboards and displays that could be connected directly to the RPi, and you could cobble together a mobile unit, or the more elegant Kindle version, but this still doesn’t give me the mobility look & feel I would like.

iPad to the rescue (assuming you have one ….)! I found a great app called iSSH by Zingersoft. It claims that it is a “comprehensive VT100, VT102, VT220, ANSI, xterm, and xterm-color terminal emulator over SSH and telnet, integrated with a tunneled X server, RDP and VNC client.” I installed it, configured it quickly, and got a terminal connection to the RPi without reading the documentation …. Impressive, since successfully configuring ssh, Xserver, etc. can be challenging sometimes. (Note: The ease of this was largely due to setting up the RPi environment properly in the first place. See the earlier post). To top it off, iSSH has a slick look & feel.

Configuring iSSH

Zingersoft’s documentation on configuring iSSH is easy to follow and requires just a few steps. I had no problem getting a terminal session working to the RPi, but I did have problems with graphics programs (i.e. X Window client programs). It appears that iSSH’s terminal isn’t really xterm but a terminal emulation (secured via ssh). The iSSH terminal doesn’t use the X server. In fact, while in the terminal session, to see the X server display (i.e. to see graphics applications rendered via the RPi X client) you must hit the button at the top right of the iPad display.

Frankly, the fact that the X application didn’t work the first time wasn’t a big surprise to me. I have been struggling with getting my X Window configuration set up to work reliably for some time. What I was observing was that X would work fine for some time, but at some point I would get the error message “couldn’t connect to display”.

This error is very common and nearly every X user has seen some version of this before. I assumed that the right way to solve this was to gain a deeper understanding of X Windows and discover the root cause. I did gain a deeper understanding of X Windows, but a solution to my problem never jumped out from the “official” materials I read. I found the solution when I happened upon the blog “X11 Display Forwarding Fails After Some Time“.

The root cause of my error message is the time out of the X Forwarding. I have been using the -X option when using ssh. This is the more secure option for X Forwarding, but comes at a price, as shown below.

- Using ssh -X, X forwarding is enabled in “Untrusted” mode, making use of various X security extensions, including a time-limited Xauth cookie.

- Use ssh -Y to enable “Trusted” mode for X, which will enable complete access to your X server. There is no timeout for this option.

So my Display problem isn’t really an error, per se, but ssh timing out on me. To fix this, I added the entry ForwardX11Timeout 596h to my ~/.ssh/config file on my PC. With this problem solved, I continued my journey into X.
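For anyone wanting to try the same fix, here is a rough sketch of what such an entry might look like. The host alias rpi and the host name raspberrypi.local are placeholders made up for this example, as is the CONFIG_SNIPPET variable; the script writes to a scratch file so nothing in the real ~/.ssh/config is touched until you have reviewed it.

```shell
# Write an example ssh client entry to a scratch file for review.
# "rpi" and "raspberrypi.local" are placeholder names; substitute your own.
# CONFIG_SNIPPET is a variable introduced for this example only.
CONFIG_SNIPPET=${CONFIG_SNIPPET:-${TMPDIR:-/tmp}/ssh_config_snippet}

cat > "$CONFIG_SNIPPET" <<'EOF'
Host rpi
    HostName raspberrypi.local
    ForwardX11 yes
    ForwardX11Timeout 596h
EOF

cat "$CONFIG_SNIPPET"
# After reviewing, append it to the real file by hand, for example:
#   cat "$CONFIG_SNIPPET" >> ~/.ssh/config
```

ForwardX11 yes has the same effect as passing -X on the command line, so with an entry like this a plain ssh rpi would request untrusted X forwarding with the longer timeout.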

My Journey to a Better Understanding of X

Using X Windows for the first time can be somewhat of a shock to someone familiar with other graphical environments, such as Microsoft Windows or Mac OS. X was designed from the beginning to be network-centric, and uses a “client-server” model. In the X model, the “X server” runs on the computer that has the keyboard, monitor, and mouse attached. The server’s responsibility includes tasks such as managing the display and handling input from the keyboard, mouse, and other input or output devices (e.g., a “tablet” can be used as an input device, and a video projector can be an output device, etc.). Each X application (such as xterm) is a “client”. A client sends messages to the server requesting things like “Please draw a window at these coordinates”, and the server sends back messages such as “The user just clicked on the OK button”. This standardized set of messages makes up the X Protocol.

The X server and the X clients commonly run on the same computer. However, it is perfectly possible to run the X server on a less powerful computer (e.g. in my case a PC or iPad), and run X applications (the clients) on a powerful machine that serves multiple X servers (or it can be a simple RPi, as in my case). In this scenario the communication between the X client and server takes place over the network (WiFi for my iPad), via the X Protocol. There is nothing in the protocol that forces the client and server machines to be running the same operating system, or even to be running on the same type of computer.

The Display

It is important to remember that the X server is the software program which manages the monitor, keyboard, and pointing device. In the X window system, these three devices are collectively referred to as the “display”. Therefore, the X server serves displaying capabilities, via the display, to other programs, called X clients, that connect to the X server. All these connections are established via the X protocol.

The relationship between the X server and the display is tight, in that the X server is engineered to support a specific display (or set of displays). As a user of X, you don’t have any control over this relationship; only the software developer who created the server can modify it (generally speaking). On the other hand, as a user you have a free hand in configuring the X protocol connection between the X server and the X clients.

How do you establish an X Protocol connection between any given server and a client? This is done via the environment variable “DISPLAY”. The Linux environment variable DISPLAY tells all X clients what display they should use for their windows. Its value is set by default in ordinary circumstances, when you start an X server and run jobs locally. Alternatively, you can specify the display yourself. One reason to do this is when you want to log into another system and run an X client there, but have the window displayed at your local terminal. In that case, the DISPLAY environment variable must point to your local terminal.

So the environment variable “DISPLAY” stores the address for X clients to connect to. These addresses are in the form: hostname:displaynumber.screennumber where

- hostname is the name of the computer where the X server runs. An omitted hostname means the localhost.

- displaynumber is a sequence number (usually 0). It can be varied if there are multiple displays connected to one computer.

- screennumber is the screen number. A display can actually have multiple screens. Usually there’s only one screen though, where 0 is the default.
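To make the three parts concrete, here is a small sketch that splits a display address into its pieces using shell parameter expansion. The function name parse_display is made up for this example, and the sketch assumes a plain host name or IPv4 address (an IPv6 address, which contains colons, would need extra handling).

```shell
# Split an X display address of the form
#   hostname:displaynumber.screennumber
# parse_display is an illustrative name; IPv6 hosts are not handled.
parse_display() {
    local value=$1
    local host=${value%%:*}      # text before the first colon
    local rest=${value#*:}       # text after the first colon
    local display=${rest%%.*}    # text before the first dot
    local screen=0               # the screen number defaults to 0
    case $rest in
        *.*) screen=${rest#*.} ;;
    esac
    echo "host=${host:-localhost} display=$display screen=$screen"
}

parse_display "128.100.2.16:0.0"
parse_display ":0"
```

The second call shows the two defaults at work: with the host name omitted the local machine is assumed, and with no screen number given screen 0 is used.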

Setting the DISPLAY variable depends on your shell, but for the Bourne, Bash or Korn shell, you could do the following to connect with the system’s local display:

export DISPLAY=localhost:0.0

The remote machine knows where it has to send the X network traffic from the definition of the DISPLAY environment variable, which generally points to an X server located on your local computer.

X Security

So you see, as the user, you have full control over where you wish to display the X client window. So what prevents you from doing something malicious, like popping up a window on someone else’s terminal or reading their key strokes? After all, all you really need is their host name. X servers have two ways of authenticating connections: the host list mechanism (xhost) and the magic cookie mechanism (xauth). Then there is ssh, which can forward X connections, providing a protected connection between client and server over a network using a secure tunnelling protocol.

The xhost Command

The xhost program is used to add and delete host (computer) names or user names to the list of machines and users that are allowed to make connections to the X server. This provides a rudimentary form of privacy control and security. A typical use is as follows: Let’s call the computer you are sitting at the “local host” and the computer you want to connect to the “remote host”. You first use xhost to specify which computer you want to give permission to connect to the X server of the local host. Then you connect to the remote host using telnet. Next you set the DISPLAY variable on the remote host. You want to set this DISPLAY variable to the local host. Now when you start up a program on the remote host, its GUI will show up on the local host (not on the remote host).

For example, assume the IP address of the local host is 128.100.2.16 and the IP address of the remote host is 17.200.10.5.

On the local host, type the following at the command line:

xhost + 17.200.10.5

Log on to the remote host

telnet 17.200.10.5

On the remote host (through the telnet connection), instruct the remote host to display windows on the local host by typing:

export DISPLAY=128.100.2.16:0.0

Now when you type xterm on the remote host, you should see an xterm window on the local host. When you are done, you should remove the remote host from your access control list as follows:

xhost - 17.200.10.5
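Since it is easy to forget that last cleanup step, here is a small Python sketch that grants access only for the duration of a with-block and always revokes it afterwards. The function name temporary_xhost_access and the run parameter are my own inventions for illustration; the sketch assumes the xhost program is on the PATH and that `xhost +HOST` and `xhost -HOST` behave like the spaced forms shown above.

```python
import subprocess
from contextlib import contextmanager


@contextmanager
def temporary_xhost_access(host, run=subprocess.check_call):
    """Let `host` connect to the local X server only inside the with-block.

    `run` is the callable used to execute each command; it is a parameter
    so the sequence can be inspected without a real X server.
    """
    run(["xhost", "+" + host])       # add host to the access control list
    try:
        yield
    finally:
        run(["xhost", "-" + host])   # always remove it again, even on error


# Typical use on the local host (commented out so nothing runs on import):
# with temporary_xhost_access("17.200.10.5"):
#     input("Run your remote X clients, then press Enter to revoke access...")
```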

Some additional xhost commands:

- xhost: lists all the hosts that have access to the X server.

- xhost + hostname: adds hostname to the X server access control list.

- xhost - hostname: removes hostname from the X server access control list.

- xhost +: turns off access control (all remote hosts will have access to the X server … generally a bad thing to do).

- xhost -: turns access control back on (all remote hosts are blocked from the X server).

Xhost is a very insecure mechanism. It does not distinguish between different users on the remote host. Also, hostnames (addresses actually) can be spoofed. This is bad if you’re on an untrusted network.

The xauth Command

Xauth allows access to anyone who knows the right secret. Such a secret is called an authorization record, or a magic cookie. This authorization scheme is formally called MIT-MAGIC-COOKIE-1. The cookies for different displays are stored together in the file .Xauthority in the user’s home directory (you can specify a different cookie file with the XAUTHORITY environment variable). The xauth application is a utility for accessing the .Xauthority file.

On starting a session, the X server reads a cookie from the .Xauthority file. After that, the server only allows connections from clients that know the same cookie (Note: when the cookie in .Xauthority changes, the server will not pick up the change). If you want to use xauth, you must start the X server with the -auth authfile argument. You can generate a magic cookie for the .Xauthority file using the utility mcookie (typical usage: xauth add :0 . `mcookie`).

Now that you have started your X session on the server and have your cookie in .Xauthority, you will have to transfer the cookie to the client host. There are a few ways to do this. The most basic way is to transfer the cookie manually by listing the magic cookie for your display with xauth list and injecting it into the remote host’s .Xauthority via the xauth utility.
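As a rough illustration of what goes into an authorization record, the Python sketch below produces a random 128-bit key written as 32 hex digits (the same shape of value mcookie prints) and builds the matching xauth add command line. The helper names make_cookie and xauth_add_args are hypothetical, and the sketch only prints the command rather than running it.

```python
import secrets


def make_cookie() -> str:
    """Return 128 random bits as 32 hex digits, the key size MIT-MAGIC-COOKIE-1 uses."""
    return secrets.token_hex(16)


def xauth_add_args(display: str, cookie: str) -> list:
    """Build the argument list for: xauth add <display> . <cookie>"""
    # "." is xauth shorthand for the MIT-MAGIC-COOKIE-1 protocol name
    return ["xauth", "add", display, ".", cookie]


cookie = make_cookie()
print(" ".join(xauth_add_args(":0", cookie)))
```

Running the printed command on the remote host (with the same cookie the server was started with) is the manual transfer described above.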

Xauth has a clear security advantage over xhost. You can limit access to specific users on specific computers and it does not suffer from spoofed addresses as xhost does.

X Over SSH

Even with the xhost and xauth methods, the X protocol is transmitted over the network with no encryption. If you’re worried someone might snoop on your connections (and you should worry), use ssh. SSH, or the Secure Shell, allows secure (encrypted and authenticated) connections between any two devices running SSH. These connections may include terminal sessions, file transfers, TCP port forwarding, or X Window System forwarding. SSH supports a wide variety of encryption algorithms. It supports various MAC algorithms, and it can use public-key cryptography for authentication or the traditional username/password.

SSH can do something called “X Forwarding”, which makes the communication secure by “tunneling” the X protocol over the SSH secure link. Forwarding is a type of interaction with another network application through an intermediary, in this case SSH. SSH intercepts a service request from some other program on one side of an SSH connection, sends it across the encrypted connection, and delivers it to the intended recipient on the other side. This process is mostly transparent to both sides of the connection: each believes it is talking directly to its partner and has no knowledge that forwarding is taking place. This is called tunneling since the X protocol is encapsulated within the SSH protocol.

When setting up an SSH tunnel for X11, the Xauth key will automatically be copied to the remote system (in a munged form to reduce the risk of forgery) and the DISPLAY variable will be set.

To turn on X forwarding over ssh, use the command line switch -X or write the following in your local ssh configuration file:

Host remote.host.name

ForwardX11 yes

The current version of SSH supports the X11 SECURITY extension, which provides two classes of clients: trusted clients, which can do anything with the display, and untrusted clients, which cannot inject synthetic events (mouse movement, keypresses) or read data from other windows (e.g., take screenshots). It should be possible to run almost all clients as untrusted, leaving the trusted category for screencapture and screencast programs, macro recorders, and other specialized utilities.

If you use ssh -X remotemachine, the remote machine is treated as an untrusted client; if you use ssh -Y remotemachine, the remote machine is treated as a trusted client. ‘-X’ is supposedly the safe alternative to ‘-Y’. However, as a Cygwin/X maintainer says, “this is widely considered to be not useful, because the Security extension uses an arbitrary and limited access control policy, which results in a lot of applications not working correctly and what is really a false sense of security”.
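If you launch remote X clients from a script, the choice between the two switches can be kept in one place. This is only a sketch: ssh_x11_args is a name I made up, and it simply assembles the argument list for the ssh command without running it.

```python
def ssh_x11_args(host, trusted=False, command=None):
    """Return the argv for an ssh session with X11 forwarding turned on."""
    flag = "-Y" if trusted else "-X"   # -Y: trusted forwarding, -X: untrusted
    args = ["ssh", flag, host]
    if command:
        args.append(command)           # e.g. "xterm" to start a single client
    return args


print(" ".join(ssh_x11_args("remotemachine", command="xterm")))
print(" ".join(ssh_x11_args("remotemachine", trusted=True)))
```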

You can configure SSH via the following files:

- per-user configuration is in ~/.ssh/config

- system-wide client configuration is in /etc/ssh/ssh_config

- system-wide daemon configuration is in /etc/ssh/sshd_config

The ssh server (sshd) at the remote end automatically sets DISPLAY to point to its end of the X forwarding tunnel. The remote tunnel end gets its own cookie; the remote ssh server generates it for you and puts it in ~/.Xauthority there. So, X authorization with ssh is fully automatic.

X over SSH solves some of the problems inherent to classic X networking. For example, SSH can tunnel X traffic through firewalls and NAT, and the X configuration for the session is taken care of automatically. It will also handle compression for low-bandwidth links. Also, if you’re using X11 forwarding, you may want to consider setting ForwardX11Trusted no to guard against malicious clients.
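Pulling those settings together, here is a small Python sketch that renders a per-host stanza you could paste into ~/.ssh/config. The function name x11_host_stanza is hypothetical; ForwardX11, ForwardX11Trusted and Compression are the client options involved, and the host name is just the placeholder used earlier.

```python
def x11_host_stanza(host, trusted=False, compression=False):
    """Render an ssh client config stanza that forwards X11 for one host."""
    lines = [
        "Host " + host,
        "    ForwardX11 yes",
        "    ForwardX11Trusted " + ("yes" if trusted else "no"),
    ]
    if compression:
        lines.append("    Compression yes")   # helps on low-bandwidth links
    return "\n".join(lines) + "\n"


print(x11_host_stanza("remote.host.name", compression=True))
```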

The Window Manager

The X design philosophy is much like the Linux/UNIX design philosophy, “tools, not policy”. This philosophy extends to X not dictating what windows should look like on screen, how to move them around with the mouse, what keystrokes should be used to move between windows, what the title bars on each window should look like, etc. Instead, X delegates this responsibility to an application called a “Window Manager”.

There are many window managers available for X and each provides a different look and feel. Some of them support highly configurable virtual desktops, like KDE and GNOME; some of them are lightweight desktops, like LXDE, which comes with the RPi; or you can operate bare bones (like I am on my PC while using the RPi) and let MS Windows be your Window Manager via Cygwin/X. The iPad’s iSSH can run without a Window Manager. In effect, the X server uses the display as it sees fit and you’re unable to control where things are loaded. iSSH does have a Window Manager you can use as an option, called dwm. It’s a tiling window manager, which is a reasonable way to go given that your pointing device is your finger on the iPad.

X Display Manager

The X Display Manager (XDM) is an optional part of the X Window System that is used for login session management. XDM provides a graphical interface for choosing which display server to connect to, and entering authorization information such as a login and password. Like the Linux getty utility, it performs system logins to the display being connected to and then runs a session manager on behalf of the user (usually an X window manager). XDM then waits for this program to exit, signaling that the user is done and should be logged out of the display. At this point, XDM can display the login and display chooser screens for the next user to log in.

In the small world of my RPi’s, a PC, and an iPad, I have no need for an XDM and don’t use one.

Enough of the X Journey … Now in Conclusion

So what does iSSH give me? I can now sit on the couch, watch TV, and simultaneously log in to the RPi with full X Windows support. Some would call this Nirvana but I call it just VERY NICE. The iPad/iSSH combination isn’t the perfect user experience but Zingersoft did a good job.

By the way …. the above iPad screen shot of the X Server display with a globe was rendered using the following code:

#!/usr/bin/env python

"""

Source: http://www.scipy.org/Cookbook/Matplotlib/Maps

"""

from mpl_toolkits.basemap import Basemap

import matplotlib.pyplot as plt

import numpy as np

Use_NASA_blue_marble_image = False

# set up orthographic map projection with

# perspective of satellite looking down at 50N, 100W.

# use low resolution coastlines.

# don't plot features that are smaller than 1000 square km.

map = Basemap(projection='ortho', lat_0=50, lon_0=-100, resolution='l', area_thresh=1000.)

# draw coastlines, country boundaries, fill continents.

if Use_NASA_blue_marble_image:

    map.bluemarble()

else:

    map.drawcoastlines()

    map.drawcountries()

    map.fillcontinents(color='coral')

# draw the edge of the map projection region (the projection limb)

map.drawmapboundary()

# draw lat/lon grid lines every 30 degrees.

map.drawmeridians(np.arange(0, 360, 30))

map.drawparallels(np.arange(-90, 90, 30))

# lat/lon coordinates of five cities.

lats = [40.02, 32.73, 38.55, 48.25, 17.29]

lons = [-105.16, -117.16, -77.00, -114.21, -88.10]

cities = ['Boulder, CO', 'San Diego, CA', 'Washington, DC', 'Whitefish, MT', 'Belize City, Belize']

# compute the native map projection coordinates for cities.

x, y = map(lons, lats)

# plot filled circles at the locations of the cities.

map.plot(x, y, 'bo')

# plot the names of those five cities.

for name, xpt, ypt in zip(cities, x, y):

    plt.text(xpt + 50000, ypt + 50000, name)

# make up some data on a regular lat/lon grid.

nlats = 73

nlons = 145

delta = 2. * np.pi / (nlons - 1)

lats = (0.5 * np.pi - delta * np.indices((nlats, nlons))[0, :, :])

lons = (delta * np.indices((nlats, nlons))[1, :, :])

wave = 0.75 * (np.sin(2. * lats) ** 8 * np.cos(4. * lons))

mean = 0.5 * np.cos(2. * lats) * ((np.sin(2. * lats)) ** 2 + 2.)

# compute native map projection coordinates of lat/lon grid.

x, y = map(lons * 180. / np.pi, lats * 180. / np.pi)

# contour data over the map.

CS = map.contour(x, y, wave + mean, 15, linewidths=1.5)

plt.show()

Speech Synthesis on the Raspberry Pi

Now that I can get sound out of my Raspberry Pi (RPi), the next logical step for me is speech synthesis … Right? I foresee my RPi being used as a controller/gateway for other devices (e.g. RPi or Arduino). In that capacity, I want the RPi to provide status via email, SMS, web updates, and so why not speech? Therefore, I’m looking for a good text-to-speech tool that will work nicely with my RPi.

The two dominant free speech synthesis tools for Linux are eSpeak and Festival (which has a light-weight version called Flite). Both tools appear very popular, well supported, and produce quality voices. I sensed that Festival is more feature rich and configurable, so I went with it.

To install Festival and Flite (which doesn’t require Festival to be installed), use the following commands:

sudo apt-get install festival

sudo apt-get install flite

Festival

To test out the installation, check out Festival’s man page, and execute the following:

echo "Why are you in front of the computer?" | festival --tts

date '+%A, %B %e, %Y' | festival --tts

festival --tts Gettysburg_Address.txt
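Since the goal is for the RPi to announce status on its own, the same pipe can be driven from a script. Below is a minimal Python sketch that feeds a string to festival --tts on standard input, just like the echo examples above. The function name speak and its run parameter are assumptions for illustration; it needs the festival package installed to actually produce sound.

```python
import subprocess


def speak(text, run=subprocess.run):
    """Pipe `text` to `festival --tts`, like: echo "..." | festival --tts

    `run` is the callable that executes the command; it is a parameter
    so the call can be checked on a machine without festival installed.
    """
    return run(["festival", "--tts"], input=text.encode("utf-8"), check=True)


# Example (commented out so the sketch does not need audio hardware):
# speak("Why are you in front of the computer?")
```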

Text, WAV, Mp3 Utilities

Festival also supplies a tool for converting text files into WAV files. This tool, called text2wave, can be executed as follows:

text2wave -o HAL.wav HAL.txt

aplay HAL.wav

MP3 files can be 5 to 10 times smaller than WAV files, so it might be nice to convert a WAV file to MP3. You can do this via a tool called lame.

lame HAL.wav HAL.mp3
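The two steps chain naturally, so here is a short Python sketch that derives the WAV and MP3 file names from a text file name and lists the commands to run in order. The helper name text_to_mp3_commands is made up; it only builds the same text2wave and lame command lines shown above and leaves running them (for example with subprocess.check_call) to you.

```python
import os


def text_to_mp3_commands(text_path):
    """Return the text2wave and lame command lines for one text file."""
    base, _ext = os.path.splitext(text_path)
    wav_path = base + ".wav"
    mp3_path = base + ".mp3"
    return [
        ["text2wave", "-o", wav_path, text_path],   # text -> WAV
        ["lame", wav_path, mp3_path],               # WAV -> MP3
    ]


for cmd in text_to_mp3_commands("HAL.txt"):
    print(" ".join(cmd))
```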

Flite

Flite (festival-lite) is a small, fast run-time synthesis engine developed using Festival for small embedded machines. To try it, take a famous quote from HAL, the computer in the movie “2001: A Space Odyssey”:

flite "Look Dave, I can see you're really upset about this. I honestly think you ought to sit down calmly, take a stress pill, and think things over."

Depending on how the software was built for the package, you may find that flite (and festival) has multiple voices. To find which voices were built in, use the command

flite -lv